| Author | Affiliation |
|---|---|
| James E. Colletti, MD | Mayo Clinic, Department of Emergency Medicine, Rochester, Minnesota |
| Thomas J. Flottemesch, PhD | Regions Hospital, Department of Emergency Medicine, St Paul, Minnesota |
| Tara O’Connell, MD | Hoag Hospital, Newport Beach, California |
| Felix K Ankel, MD | Regions Hospital, Department of Emergency Medicine, St Paul, Minnesota |
| Brent R Asplin, MD | Fairview Medical Group, St Paul, Minnesota |

ABSTRACT

Introduction:

Teaching ability and efficiency of clinical operations are important aspects of physician performance. To promote excellence in education and clinical efficiency, it is important to determine physician qualities that contribute to both. We sought to evaluate the relationship between teaching performance and patient throughput times.

Methods:

The setting is an urban, academic emergency department with an annual census of 65,000 patient visits. Previous analysis of an 18-question emergency medicine faculty survey at this institution identified 5 prevailing domains of faculty instructional performance. The 5 statistically significant domains identified were: Competency and Professionalism, Commitment to Knowledge and Instruction, Inclusion and Interaction, Patient Focus, and Openness and Enthusiasm. We fit a multivariate, random effects model using each of the 5 instructional domains for emergency medicine faculty as independent predictors and throughput time (in minutes) as the continuous outcome. Faculty who were absent for any portion of the research period were excluded, as were patient encounters without direct resident involvement.

Results:

Two of the 5 instructional domains were found to significantly correlate with a change in patient treatment times within both datasets. The greater a physician’s Commitment to Knowledge and Instruction, the longer their throughput time, with each interval increase on the domain scale associated with a 7.38-minute increase in throughput time (90% confidence interval [CI]: 1.89 to 12.88 minutes). Conversely, increased Openness and Enthusiasm was associated with a 4.45-minute decrease in throughput (90% CI: −8.83 to −0.07 minutes).

Conclusion:

Some aspects of teaching aptitude are associated with increased throughput times (Commitment to Knowledge and Instruction), while others are associated with decreased throughput times (Openness and Enthusiasm). Our findings suggest that a tradeoff may exist between operational and instructional performance.

INTRODUCTION

Across the United States attending physicians prepare emergency medicine (EM) residents to care for millions of patient encounters each year.1 There are multiple time demands placed on the attending physician while running an emergency department (ED). Attending physicians are presented with the critical task of teaching future emergency physicians the medical knowledge and skills needed to successfully care for patients of varying ages, medical conditions, and socioeconomic backgrounds. Unlike a traditional classroom, attending physicians must master the skill of teaching while simultaneously moving patients safely through the ED.

To date, there have been few investigations evaluating the association between the quality of EM physician teaching and clinical efficiency. A crucial first step in the promotion of excellence in education and clinical efficiency is discovering physician qualities that contribute to both effective teaching and clinical efficiency.

The objective of this investigation was to evaluate the relationship between EM educator performance (within and across 5 education performance domains) and their operational performance (as measured by their ability to maintain patient flow in an academic ED). We hypothesized that the teaching proficiency of an EM staff physician, as viewed by EM residents, is independent of clinical productivity.

METHODS

Study Design

We retrospectively analyzed prospectively collected data from 2 sources to determine whether a correlation exists between physician productivity and teaching aptitude. Approval by the local institutional review committee was obtained prior to the initiation of the investigation.

Teaching aptitude was derived from resident evaluations of staff physicians. Resident evaluations utilized the New Innovations Program Residency Management Suite (New Innovations Inc, Uniontown, Ohio). Residency Management Suite is an instrument that facilitates medical education by unifying data into a centralized data warehouse and then completing tasks through a common interface. The authors have no financial relationship with New Innovations.

Physician clinical performance was defined as the median throughput time for all patients treated by that physician. Data for throughput time were abstracted from the Epic Systems Corporation (Verona, Wisconsin) electronic medical record (EMR) system.

Study Setting and Population

This study was undertaken at an urban, academic, level-1 trauma center (Regions Hospital, St Paul, Minnesota). The annual ED census at the study site is 65,000 patient visits, with a 21% hospital admission rate and 2,500 trauma admissions per year.

The Regions Hospital EM program is a year 1 through 3 training program with 9 residents per year for a total of 27 residents. Residents are asked to complete an annual 18-item survey for each faculty member to evaluate instructional performance.

The 18-item survey was originally developed and administered as a faculty evaluation instrument. When it was first developed, the survey’s questions were intended to identify various attributes of teaching aptitude based on the Accreditation Council for Graduate Medical Education (ACGME) core competencies. Each question was intended to represent an individual area of the ACGME core competencies (patient care, medical knowledge, practice-based learning and improvement, interpersonal and communication skills, professionalism, and systems-based practice).

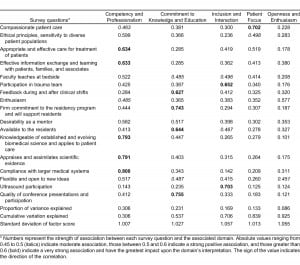

The 18-item electronic survey covering the full faculty cohort was administered to residents in late fall, twice over a 2-year period (2004–2005). Residents had over 2 weeks to fill out the survey. Using 2004 data, 5 domains of instructional quality were derived (Competency and Professionalism, Commitment to Knowledge and Instruction, Inclusion and Interaction, Patient Focus, and Openness and Enthusiasm) and then validated using 2005 data.2

Complete data were available for 24 faculty members for 2004 and for 29 faculty members for 2005 and 2006. Throughput data from the ED operations warehouse were collected for the final year, 2006.3

Study Protocol

Faculty performance data were collected in the following manner. Residents at all levels of training were asked to evaluate the teaching performance of EM faculty with an online survey (Table 1) (New Innovations Inc). This 18-question survey identifies various attributes of teaching aptitude based on ACGME core competencies. Respondents score each item using a 9-point Likert scale adapted from the American Board of Internal Medicine mini-clinical evaluation exercise.4 Responses were then assigned the following meaning: 1 through 3 indicate “below expectations”; 4 through 6 indicate “meets expectations”; and 7 through 9 indicate “exceeds expectations.” New Innovations survey software (New Innovations Inc) computed descriptive statistics (max, min, mean, median, standard deviation) for each faculty member. Three sets of survey data were collected corresponding to the years 2004, 2005, and 2006, respectively.

In a prior investigation we identified independent domains of teaching aptitude through maximum-likelihood factor analysis (see Data Analysis section for details). Five domains of instructional performance were identified: (1) Competency and Professionalism, (2) Commitment to Knowledge and Instruction, (3) Resident Inclusion and Interaction, (4) Patient Focus, and (5) Openness and Enthusiasm.2

Patient-level throughput data were gathered from the Regions Hospital ED operations warehouse. The ED operations warehouse is a structured query language database developed at the Regions Hospital ED to assist in patient tracking and measuring operational performance. The database is populated by event-level data from the Regions Hospital EMR (Epic system). In the EMR, a patient encounter begins as soon as the patient enters the ED and requests care. The encounter ends when the system records a final ED disposition. The ED operations warehouse defines throughput as the difference between these 2 timestamps. Of the approximately 65,000 patient encounters tracked in the ED operations warehouse, those without direct resident involvement were excluded. This resulted in 38,526 patient encounters in the final dataset used to compare physician teaching and throughput performance.
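The throughput definition and the exclusion rule above can be sketched in a few lines of Python. This is a minimal illustration only, not the warehouse's actual query: the field names (`arrival`, `disposition`, `resident_involved`) and the two encounters are hypothetical.

```python
from datetime import datetime

def throughput_minutes(arrival, disposition):
    """Minutes between the start of the encounter and the final ED disposition."""
    if disposition < arrival:
        raise ValueError("disposition timestamp precedes arrival timestamp")
    return (disposition - arrival).total_seconds() / 60.0

# Hypothetical encounters for illustration only; not study data.
encounters = [
    {"arrival": datetime(2006, 3, 1, 14, 5),
     "disposition": datetime(2006, 3, 1, 17, 20),
     "resident_involved": True},
    {"arrival": datetime(2006, 3, 1, 15, 0),
     "disposition": datetime(2006, 3, 1, 16, 0),
     "resident_involved": False},
]

# Keep only encounters with direct resident involvement, mirroring the exclusion rule.
included = [e for e in encounters if e["resident_involved"]]
throughputs = [throughput_minutes(e["arrival"], e["disposition"]) for e in included]
```

Here only the first encounter is retained, with a throughput of 195 minutes.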

Data Analysis

Analysis proceeded in 2 phases. The first phase used data from physician teaching performance surveys completed by the EM residents. These data were separated by year (2004, 2005, and 2006). Using the 2004 survey data, an exploratory maximum-likelihood factor analysis employing a Varimax rotation identified potential, independent domains of EM faculty performance. Two confirmatory factor analyses using the data from 2005 and 2006 were conducted to confirm the consistency of the latent structure (ie, performance domains) identified in the 2004 data. The consistency of the performance within a given instructional domain over time was confirmed using Cronbach alpha at the physician level.
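For readers unfamiliar with Cronbach alpha, the following Python sketch shows the standard formula, α = k/(k − 1) × (1 − Σ item variances / variance of totals). It assumes, purely for illustration, that each physician is a row and each survey year is treated as an "item"; the article does not spell out the exact layout used, and the scores below are hypothetical.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Cronbach alpha for a table of scores.

    rows: one list per physician, holding that physician's domain score
    for each survey year (assumed layout; years play the role of items).
    """
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least 2 items and 2 physicians")
    columns = list(zip(*rows))                       # one column per year
    item_variance = sum(variance(col) for col in columns)
    total_variance = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - item_variance / total_variance)

# Hypothetical centered domain scores for 4 physicians over 3 years.
scores = [
    [0.8, 0.6, 0.9],
    [-0.2, 0.1, -0.4],
    [1.1, 0.7, 1.0],
    [-0.9, -0.5, -0.7],
]
alpha = cronbach_alpha(scores)
```

Values closer to 1 indicate that physicians keep roughly the same relative standing on a domain from year to year.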

The second phase of the analysis used a multivariate, random effects model with gamma-distributed errors to compare EM faculty members’ instructional and operational performance. The 38,526 patient-level encounters were randomly assigned to either the estimation or the validation dataset. The estimation dataset was used to develop the model, and the validation dataset was used to protect against overfitting. In this model, patient encounters were nested within EM faculty. Construction of the models used the estimation dataset and proceeded in a bottom-up fashion. First, the possibility of significant variation at the physician level (ie, a significant random effect) was examined. Then, a baseline model using patient-level confounding factors such as age, gender, time/day of presentation, and acuity as measured by the Emergency Severity Index scale was developed. All potential confounders were screened prior to inclusion. Those significant at the 10% level in a univariate model were retained in the final multivariate model. Using the final patient-level baseline model, the relation between performance along each educational domain and throughput time was explored in a series of separate models. Finally, a multivariate model simultaneously including all 5 domain scores was estimated to determine which domain effects were dominant. The validation dataset was used to confirm the findings.
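The random assignment of encounters to estimation and validation datasets can be sketched as follows. The split proportion is not reported in the article, so the 50/50 default here is an assumption, as are the function name and the fixed seed (used only to make the sketch reproducible).

```python
import random

def split_encounters(encounter_ids, estimation_fraction=0.5, seed=0):
    """Randomly assign encounter IDs to estimation and validation sets.

    estimation_fraction is an assumed value; the study does not report it.
    """
    rng = random.Random(seed)
    shuffled = list(encounter_ids)
    rng.shuffle(shuffled)
    cut = round(len(shuffled) * estimation_fraction)
    return shuffled[:cut], shuffled[cut:]

# 38,526 encounters, identified here by placeholder integer IDs.
estimation_ids, validation_ids = split_encounters(range(38526))
```

Every encounter lands in exactly one of the two sets, so a model developed on the estimation set can be checked against encounters it has never seen.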

RESULTS

Exploratory factor analysis of the 2004 data revealed 5 latent constructs (ie, educational performance domains) that explained 92.5% (χ² = 2.33, P = 0.11) of the variation in the data. Factor analysis of the 2005 and 2006 resident surveys confirmed the validity of these constructs; they explained 89.6% and 90.5% of the data’s variations, respectively (χ² = 1.89, P = 0.25). The 5 instructional domains were (1) Competency and Professionalism (30% of variation explained), (2) Commitment to Knowledge and Instruction (17% of variation explained), (3) Inclusion and Interaction (17% of variation explained), (4) Patient Focus (13% of variation explained), and (5) Openness and Enthusiasm (9% of variation explained).2 Table 2 presents the factor loadings and proportion of variance explained using the 2004 data. Performance across the instructional domains appeared consistent across years per Cronbach alpha at the physician level (0.675–0.752).

The items contributing most to developing Competency and Professionalism were compliance with the medical system (0.8), knowledge and application of science (0.79), appraisal of scientific evidence (0.79), appropriate and effective care (0.63), and effective information exchange (0.63). Items contributing to Commitment to Knowledge and Instruction were conference presentations and participation (0.75), commitment to residency program (0.74), availability (0.64), and feedback (0.62). Items contributing to Inclusion and Interaction were ultrasound participation (0.70) and trauma team participation (0.65). Patient Focus was mainly determined by compassionate patient care (0.70), with appropriate and effective treatment (0.52) also contributing. The final factor, Openness and Enthusiasm, had no strong contributors, with only enthusiasm (0.57) and flexibility (0.45) contributing (Table 3).

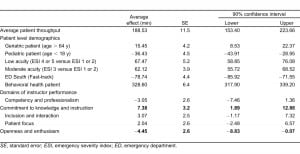

Prior to inclusion in these models, physician scores across all 5 educational domains were centered at 0. Standard errors (SE) are listed at the bottom of Table 2. Table 4 contains the results from the final multivariate model incorporating all of the educational domains and patient-level confounders. These results were estimated using the validation dataset. The average patient throughput time at the study site was 188.53 minutes (SE 11.5). In addition to hour of arrival, the following patient-level confounders were found to significantly impact patient flow: age ≥ 65 years (15.45 minutes, SE 4.2), age < 18 years (−36.43 minutes, SE 4.5), low acuity (67 minutes, SE 5.2), moderate acuity (62 minutes, SE 3.9), fast track (−79 minutes, SE 4.4), and behavioral health (328 minutes, SE 6.4).

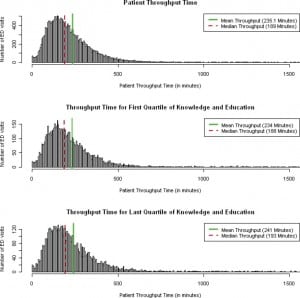

All 5 of the educational domains were significantly related to patient throughput times when included independently in multivariate models adjusting for patient-level factors only. Competency and Professionalism (−5.3, SE 2.1) and Openness and Enthusiasm (−8.2, SE 1.9) were associated with decreased throughput times (ie, improved patient flow). In contrast, Commitment to Knowledge and Instruction (11.5, SE 2.8), Inclusion and Interaction (6.3, SE 2.4), and Patient Focus (4.5, SE 2.1) were associated with increased throughput times. When simultaneously incorporated into a single multivariate model, the directionality of all 5 educational domains was consistent, but only 2 remained significant at the α = 0.1 level. Commitment to Knowledge and Instruction was associated with increased throughput time (7.38, SE 3.2) while Openness and Enthusiasm was associated with decreased throughput time (−4.45, SE 2.6). From this final model, 2 statements can be made regarding the interrelated nature of instructional performance and patient flow. For the domain Commitment to Knowledge and Instruction, each standard deviation (1.027) increase in the domain was associated with a 7.59-minute increase in patient throughput time (90% confidence interval [CI]: 1.94 to 13.23 minutes). For the domain Openness and Enthusiasm, each standard deviation (1.06) increase was associated with a 4.69-minute decrease in patient throughput time (90% CI: −9.31 to −0.074 minutes). A histogram of patient throughput time can be seen in the Figure.
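The per-standard-deviation figures follow from multiplying the per-unit estimate and its confidence limits by the domain's standard deviation. The Python sketch below illustrates that arithmetic for Commitment to Knowledge and Instruction, using the per-unit estimate and 90% CI reported in the abstract (7.38; 1.89 to 12.88) and the reported standard deviation (1.027). The function name is hypothetical, and the calculation is an illustration of the rescaling, not the authors' code.

```python
def per_sd_effect(coefficient, ci_low, ci_high, domain_sd):
    """Rescale a per-unit effect and its CI limits to a per-SD effect."""
    return tuple(round(value * domain_sd, 2)
                 for value in (coefficient, ci_low, ci_high))

# Published per-unit estimate and 90% CI, rescaled by the domain SD.
commitment_per_sd = per_sd_effect(7.38, 1.89, 12.88, 1.027)
```

This gives about 7.58 minutes (1.94 to 13.23), which agrees with the reported 7.59 minutes (1.94 to 13.23) up to a small difference that presumably reflects rounding of the published inputs.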

DISCUSSION

Teaching residents is an important aspect of academic medicine. Clinical teaching in the ED has a significant impact on medical knowledge, professionalism, medical decision making, procedural skills, and communication.5–9

The relationship between faculty and resident is a mentoring one. The “Osler” model of residency training suggests that staff physicians are not merely distant figures but are actively involved in instructing residents while caring for patients.10 William Osler stated “the art of medicine is an observation, as the old motto goes, but to educate the eye to see, the ear to hear, and the finger to feel takes time, and to make a beginning to start a man on the right path is all that we can do.”11 When time with the learner is hurried the end result is often not quite what the mentor had planned.12

Clinical education of residents is a priority for both academic departments of EM and the ACGME. The following language is included in the ACGME statement on duty hours, “Didactic and clinical education must have priority in the allotment of residents’ time and energy.”13 In the same document the ACGME goes on to say, “The program must ensure that qualified faculty provide appropriate supervision of residents in patient care activities.”13

Many factors compete with education for faculty time. We specifically evaluated the relationship between clinical productivity and time spent instructing and mentoring residents. Throughput time is an important component of the much larger healthcare issue of overcrowding facing EM in the United States.14,15

The escalation of crowding in EDs across the United States will likely result in increasing pressure placed on faculty to improve patient throughput time and may further divert faculty time away from resident instruction. More than ever, the clinician educator must balance the needs of the learner with the larger issues of patient care. In truth, this is only one piece of a large puzzle. Faculty not only have to balance resident education, clinical productivity, and issues of the nation’s healthcare safety net such as crowding, but also provide sufficient documentation to meet the Centers for Medicare and Medicaid Services guidelines for evaluation and management coding that emergency practitioners face regardless of practice setting.16–18

Investigations by several authors have indicated that staff physicians feel that the demands of increasing clinical productivity and documentation directly inhibit teaching success.17–20 The results of a survey by McLean and Feldman17 indicate clinical documentation demands are associated with a decrease in teaching time. Fields and colleagues18 expressed a similar concern (regarding the demands of documentation) and commented that the medical curricula were at risk. Bandiera et al19 state “frequent interruptions and competing demands are perceived as detrimental to effective teaching” in the ED. The authors further note “during busy ED shifts, with patient waiting times measured in hours, dedicated teaching time is hard to find.” Berger et al20 remarked that 96% of the faculty at a large teaching institution believed that the time demand for clinical productivity was the largest limiting factor in being able to effectively teach students. However, the authors found no relationship between staff productivity and medical student teaching evaluations.20

The above investigations did not specifically evaluate the association between the quality of physician teaching and clinical efficiency. We sought to evaluate the relationship between teaching performance (measured by instructional domains identified within teaching evaluations) and physician throughput time. Our results suggest that certain aspects of teaching aptitude are associated with patient flow. We found that the instructional performance domain Commitment to Knowledge and Instruction was associated with a significant increase in throughput time. Conversely, the instructional performance domain of Openness and Enthusiasm was associated with decreased throughput time.

Overall, academic contribution, educational quality, and operational performance are aspects of EM physician performance. EM faculty must impart knowledge while simultaneously moving patients safely and efficiently through the ED. We observed that instructional performance was significantly correlated with operational performance in that all 5 domains in the study correlated with patient throughput times in separate models. As demands for physicians’ time increase, it is important to understand the relationship between competing demands facing academic faculty. Perhaps the most important findings in this investigation involve identifying faculty attributes that most affect the potential to successfully educate and mentor residents as well as efficiently maintain patient flow within an ED. Defining faculty attributes that are mutually beneficial to both education and clinical productivity is important as the pressure increases to do both well. Of the 5 educational performance domains, Openness and Enthusiasm was mutually beneficial to education and clinical productivity.

LIMITATIONS

Our study has several limitations. First, we chose a method of faculty evaluation that residents perform on an annual basis. Annual evaluations may not accurately depict daily learning interactions. Second, ED-related factors such as faculty and resident patient load and level of resident training (the amount of time a faculty member spent teaching and supervising a first-year resident versus a more senior resident) were not controlled for. Therefore, the effect these variables may have had on teaching performance or clinical productivity is unknown. Third, third-party independent observation of teaching encounters was not performed. As such, there is no benchmark against which to compare the teaching interactions of faculty with residents, students, and midlevel providers (physician assistants and sexual assault nurse examiners) on a given shift. Nor was there a benchmark to determine the effect that midlevel providers and students had on clinical efficiency. Furthermore, the effect that an individual faculty member’s personality had on their evaluation is unknown. Fourth, faculty behaviors may not have been static and independent of context. For example, in low departmental demand states, faculty may have spent more time with residents, showing a higher Commitment to Knowledge and Instruction. Conversely, in high departmental demand states, faculty may have exhibited behavior more consistent with Openness and Enthusiasm. Fifth, this investigation was performed at a single institution. An investigation with multiple clinical sites would need to be undertaken in order to increase the generalizability of the study’s findings. Sixth, as is common among academic institutions, there is some heterogeneity in shift distribution and number of clinical hours faculty members work.

CONCLUSION

Faculty performance in specific domains of instructional quality has significant but varied associations with patient throughput time. Some aspects of teaching aptitude appear to improve throughput time (Openness and Enthusiasm) while other aspects appear to hinder throughput time (Commitment to Knowledge and Instruction). Our findings suggest that a tradeoff may exist between operational performance and certain areas of instructional performance.

Footnotes

Supervising Section Editor: Douglas S. Ander, MD

Submission history: Submitted June 27, 2011; Revision received September 15, 2011; Accepted October 3, 2011

Reprints available through open access at http://escholarship.org/uc/uciem_westjem

DOI: 10.5811/westjem.2011.10.6842

Address for Correspondence: James E. Colletti, MD

Mayo Clinic, Department of Emergency Medicine, 200 First St SW, Rochester, MN 55905

E-mail: colletti.james@mayo.edu

Conflicts of Interest: By the WestJEM article submission agreement, all authors are required to disclose all affiliations, funding, sources, and financial or management relationships that could be perceived as potential sources of bias. The authors disclosed none.

REFERENCES

1. Centers for Disease Control (CDC)/National Center for Health Statistics. Annual number of visits to hospital emergency departments: United States, 2004. CDC Web site. Available at: http://www.cdc.gov/nchs/data/ahcd/ed19922004trend.pdf. Accessed June 15, 2010.

2. Colletti JE, Flottemesch TJ, O’Connell TA, et al. Developing a standardized faculty evaluation in an emergency medicine residency. J Emerg Med. 2010;39:662–668.

3. Gordon BD, Asplin BR. Using online analytical processing to manage emergency department operations. Acad Emerg Med. 2004;11:1206–1212.

4. American Board of Internal Medicine. Mini-CEX. ABIM Web site. Available at: http://www.abim.org/program-directors-administrators/assessment-tools/mini-cex.aspx. Accessed November 30, 2011.

5. Burdick WP, Schoffstall J. Observation of emergency medicine residents at the bedside: how often does it happen? Acad Emerg Med. 1995;2:909–913.

6. Griffith CH 3rd, Wilson JF, Haist SA, et al. Relationships of how well attending physicians teach to their students’ performance and residency choices. Acad Med. 1997;72(suppl 10):S118–S120.

7. Burdick WP, Jouriles NJ, D’Onofrio G, et al. Emergency medicine in undergraduate education. Acad Emerg Med. 1998;5:1105–1110.

8. Stern DT, Williams BC, Gill A, Gruppen LD, et al. Is there a relationship between attending physicians’ and residents’ teaching skills and students’ examination scores? Acad Med. 2000;75:1144–1146.

9. Kelly SP, Shapiro N, Woodruff M, et al. The effects of clinical workload on teaching in the emergency department. Acad Emerg Med. 2007;14:526–531.

10. Franzese CB, Stringer SP. The evolution of surgical training: perspectives on educational models from the past to the future. Otolaryngol Clin North Am. 2007;40:1227–1235.

11. Osler W. On the need of radical reform in our methods of teaching senior students. Med News. 1903;82:49–53.

12. Dunnington GL. The art of mentoring. Am J Surg. 1996;171:604–607.

13. Accreditation Council for Graduate Medical Education. 2009 Resident duty hours language. ACGME Web site. Available at: http://www.acgme.org/acWebsite/dutyHours/dh_Lang703.pdf. Accessed June 16.

14. Asplin BR, Magid DJ, Rhodes KV, et al. A conceptual model of emergency department crowding. Ann Emerg Med. 2003;42:173–180.

15. Solberg LI, Asplin BR, Weinick RM, et al. Emergency department crowding: consensus development of potential measures. Ann Emerg Med. 2003;42:824–834.

16. Bernstein SL, Asplin BR. Emergency department crowding: old problem, new solutions. Emerg Med Clin North Am. 2006;24:821–837.

17. McLean SA, Feldman JA. The impact of changes in HCFA documentation requirements on academic emergency medicine: results of a physician survey. Acad Emerg Med. 2001;8:880–885.

18. Fields SA, Morrison E, Yoder E, et al. Clerkship directors’ perceptions of the impact of HCFA documentation guidelines. Acad Med. 2002;77:543–546.

19. Bandiera G, Lee S, Tiberius R. Creating effective learning in today’s emergency departments: how accomplished teachers get it done. Ann Emerg Med. 2005;45:253–261.

20. Berger TJ, Ander DS, Terrell ML, et al. The impact of the demand for clinical productivity on student teaching in academic emergency departments. Acad Emerg Med. 2004;11:1364–1367.